Andrew Ross has announced that all the FOSS4G 2011 and State of the Map videos are now up and available at the FOSSLC web site. Andrew provided the following URLs for browsing these two categories, each of which gives an extended blurb about every video:

http://www.fosslc.org/drupal/category/event/foss4g2011

http://www.fosslc.org/drupal/category/event/sotm

However, I prefer the search mechanism, which lists many more talks per page, showing just the title.

http://www.fosslc.org/drupal/search/node/foss4g2011

At the risk of being self-obsessed, I'll highlight two of my talks. I can't seem to find the other two.

I had also really wanted to attend Martin Davis' talk on Spatial Processing Using JEQL but somehow missed it. This weekend I'll review it. There were some other great keynotes and presentations. As you can imagine, Paul Ramsey's keynote on Open Source Business Models was informative, amusing and engaging.

The videos were captured by Andrew's team of volunteers, and deployed on the FOSSLC site, which includes lots of great videos of talks from other conferences. This has really been a passion of Andrew's for some time, and at least in the OSGeo community I don't think the value of it has been fully recognized and exploited. I hope that others will look through the presentations and blog, tweet, +1 and like the ones they find most useful and interesting. Virtual word of mouth is one way to improve the leverage from the work of capturing the videos and the effort to produce the original presentations.

I'd also like to thank Andrew and his helpers Teresa Baldwin, Scott Mitchell, Christine Richard, Alex Trofast, Thanha Ha, "Steve", Paul Deshamps, Assefa Yewondwossen, Nathalie West, Mike Adair and "Ben", many of whom I count as personal friends from the OSGeo Ottawa crowd.

Saturday, November 5, 2011

Monday, June 20, 2011

Joining Google

Today I accepted a job with Google as a GIS Data Engineer. I will be based in Mountain View, California, at the head office, and involved in various sorts of geodata processing, though I don't really know the details of my responsibilities yet.

I have received occasional email solicitations from Google recruiters in the past, but hadn't really taken them too seriously. I assumed it was a "wide net" search for job candidates and I wasn't looking for regular employment anyways. I was happy enough in my role of independent consultant. It gave me great flexibility and a quite satisfactory income.

Various things conspired to make me contemplate my options. A friend, Phil Vachon, moved to New York City for a fantastic new job. His description of the compensation package made me realize opportunities might exist that I was missing out on. Also, with separation from my wife last year the need to stay in Canada was reduced. So when a Google recruiter contacted me again this spring I took a moment to look over the job description.

The description was for the role of GIS Data Engineer, and it was a dead-on match for my skill set. So I thought I would at least investigate a bit, and responded. I didn't hear back for many weeks, so I assumed I had been winnowed out already or perhaps the position had already been filled. But a few weeks ago I was contacted, and invited to participate in a phone screening interview. That went well, and among other things my knowledge of GIS file formats and coordinate systems stood me in good stead. So I was invited to California for in-person interviews.

That was a grueling day. Five interviews, including lots of "write a program to do this on the blackboard" sorts of questions. Those interviewing me, mostly engineers in the geo group, seemed to know little of my background and I came away feeling somewhat out of place, and like they were really just looking for an entry-level engineer. Luckily Michael Weiss-Malik spent some time at lunch talking about what his group does and gave me a sense of where I would fit in. This made me more comfortable.

Last week I received an offer and it was quite generous. It certainly put my annual consulting income to shame. Also, contact with a couple of friends inside Google gave me a sense that there were those advocating on my behalf who did know more about my strengths.

I agonized over the weekend and went back and forth quite a bit on the whole prospect. While the financial offer was very good, I didn't particularly need that. And returning to "regular employment" was not in line with my hopes to travel widely, doing my consulting from a variety of exotic locales as long as they had internet access. But salting away money would make it much easier to pursue such a lifestyle at some point in the future.

I also needed to consult extensively with my family. It was very difficult to leave my kids behind, though it helps to know that as teens they are quite independent. I am also confident that their mother will be right there taking good care of them. My kids and other family members were all very supportive.

My other big concern was giving up my role in the open source geospatial community. While nothing in the job description prevents me from still being active in the GDAL project and OSGeo, it is clear the job will consume much of my energy and time. I can't expect to play the role I have played in the past, particularly of enabling use of GDAL in commercial contexts by virtue of being available as a paid resource to support users.

I don't have the whole answer to this, but it is certainly my intention to remain active in GDAL/OGR and in OSGeo (on the board and other committees). One big plus of working at Google is the concept of 20% time. I haven't gotten all the details, but it roughly allows me to use 20% of my work time for self-directed projects. My hope is to use much of my 20% time to work on GDAL/OGR.

Google does make use of GDAL/OGR for some internal data processing and in products like Google Earth Professional. My original hope had been that my job would at least partly be in support of GDAL and possibly other open source technologies within Google. While things are still a bit vague, that does not seem to be immediately the case, though I'm optimistic such opportunities might arise in the future. But I think this usage does mean that work on GDAL is a reasonable thing to spend 20% time on.

I also made it clear that I still planned to participate in OSGeo events like the FOSS4G conference. I'm pleased to confirm that I will be attending FOSS4G 2011 in Denver as a Google representative and I am confident this will be possible in future years as well.

The coming months will involve many changes for me, and I certainly have a great deal to learn to make myself effective as an employee of Google. But I am optimistic that this will be a job and workplace that still allows me to participate in, and contribute to, the community that I love so much. I also think this will be a great opportunity for me to grow. Writing file translators for 20 years can in some ways become a rut!

Friday, February 18, 2011

MapServer TIFF Overview Performance

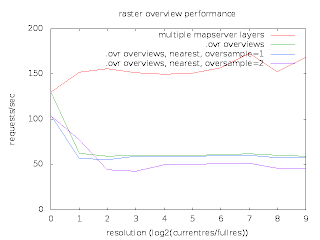

Last week Thomas Bonfort, MapServer polymath, contacted me with some surprising performance results he was getting while comparing two ways of handling image overviews with MapServer.

In one case he had a single GeoTIFF file with overviews built by GDAL's gdaladdo utility in a separate .ovr file. Only one MapServer LAYER was used to refer to this image. In the second case he used a distinct TIFF file for each power-of-two overview level, and a distinct LAYER with minscale/maxscale settings for each file. So in the first case it was up to GDAL to select the optimum overview level out of a merged file, while in the second case MapServer decided which overview to use via the layer scale selection logic.
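In both strategies something has to map the requested map resolution onto a power-of-two overview level. A minimal sketch of that decision follows. The function name and the rule used (take the coarsest overview whose pixels are still no larger than the requested pixels) are illustrative assumptions, not the actual logic in GDAL or in MapServer's minscale/maxscale handling.

```python
import math


def pick_overview_level(base_res, requested_res, overview_count):
    """Return 0 for the full resolution image, or n for the 2**n overview.

    Hypothetical rule: choose the coarsest overview whose pixel size does
    not exceed the requested pixel size, capped at the levels available.
    """
    if requested_res <= base_res:
        return 0
    level = int(math.floor(math.log2(requested_res / base_res)))
    return min(level, overview_count)
```

With a single LAYER, a rule of this kind runs inside GDAL on every request. With one LAYER per overview file, the equivalent choice is made up front by the scale ranges written into the mapfile.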

He was seeing roughly twice the performance with the MapServer multi-layer approach as with letting GDAL select the right overview. He prepared a graph and scripts with everything I needed to reproduce his results.

I was able to reproduce similar results at my end, and set to work trying to analyse what was going on. The scripts used apache-bench to run MapServer in its usual cgi-bin incarnation. This is a typical use case, and in aggregate shows the performance. However, my usual technique for investigating performance bottlenecks is very low-tech: I do a long run demonstrating the issue in gdb, hit Ctrl-C frequently, and use "where" to examine what is going on. If the bottleneck is dramatic enough this is usually informative. But I could not apply this to the very short-running cgi-bin processes.

So I set out to establish a similar work load in a single long-running MapScript application. In doing so I discovered a few things. First, there was no way to easily load the OWSRequest parameters from a URL except through the QUERY_STRING environment variable. So I extended mapscript/swiginc/owsrequest.i to have a loadParamsFromURL() method. My test script then looked like:

The second thing I learned is that Thomas' recent work to directly use libjpeg and libpng for output in MapServer had not honoured the msIO_ IO redirection mechanism needed for the above. I fixed that too.

This gave me a proces that would run for a while and that I could debug with gdb. A sampling of "what is going on" showed that much of the time was being spent loading TIFF tags from directories - particularly the tile offset and tile size tag values.

The base file used is 130000 x 150000 pixels, and is internally broken up into nearly 900000 256x256 tiles. Any map request would only use a few tiles but the array of pointers to tiles and their sizes for just the base image amounted to approximately 14MB. So in order to get about 100K of imagery out of the files we were also reading at least 14MB of tile index/sizes.

The GDAL overview case was worse than the MapServer overview case because when opening the file GDAL scans through all the overview directories to identify what overviews are available. This means we have to load the tile offset/size values for all the overviews regardless of whether we will use them later. When the offset/size values are read to scan a directory they are subsequently discarded when the next directory is read. So in cases where we need to come back to a particular overview level we still have to reload the offsets and sizes.

For several years I have had some concerns about the efficiency of files with large tile offset/size arrays, and with the cost of jumping back and forth between different overviews with GDAL. This case highlighted the issue. It also suggested an obvious optimization - to avoid loading the tile offset and size values until we actually need them. If we access an image directory (ie overview level) to get general information about it such as the size, but we don't actually need imagery we could completely skip reading the offsets and sizes.

So I set about implementing this in libtiff. It is helpful being a core maintainer of libtiff as well as GDAL and Mapserver. :-) The change was a bit messy, and also it seemed a bit risky due to how libtiff handled directory tags. So I treat the change as as an experimental (default off) build time option controlled by the new libtiff configure option --enable-defer-strile-load.

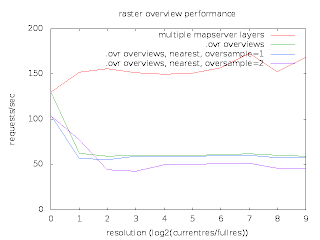

With the change in place, I now get comparable results using the two different overview strategies. Yipee! Pleased with this result I have commited the changes in libtiff CVS head, and pulled them downstream into the "built in" copy of libtiff in GDAL.

However, it occurs to me that there is still an unfortunate amount of work being done to load the tile offset/size vectors when doing full resolution image accesses for MapServer. A format that computed the tile offset and size instead of storing all the offsets and sizes explicitly might do noticeable better for this use case. In fact, Erdas Imagine (with a .ige spill file) is such a format. Perhaps tonight I can compare to that and contemplate a specialized form of uncompressed TIFF files which doesn't need to store and load the tile offset/sizes.

I would like to thank Thomas Bonfort for identifying this issue, and providing such an easy to use set of scripts to reproduce and demonstrate the problem. I would also like to thank MapGears and USACE for supporting my time on this and other MapServer raster problems.

In one case he had a single GeoTIFF file with overviews built by GDAL's gdaladdo utility in a separate .ovr file. Only one MapServer LAYER was used to refer to this image. In the second case he used a distinct TIFF file for each power-of-two overview level, and a distinct LAYER with minscale/maxscale settings for each file. So in the first case it was up to GDAL to select the optimum overview level out of a merged file, while in the second case MapServer decided which overview to use via the layer scale selection logic.

He was seeing performance using the MapServer multi-layer approach being about twice what it was with letting GDAL select the right overview. He prepared a graph and scripts with everything I needed to reproduce his results.

I was able to reproduce similar results at my end, and set to work trying to analyse what was going on. The scripts used apache-bench to run MapServer in it's usual cgi-bin incarnation. This is a typical use case, and in aggregate shows the performance. However, my usual technique for investigating performance bottlenecks is very low-tech. I do a long run demonstrating the issue in gdb, and hit cntl-c frequently, and use "where" to examine what is going on. If the bottleneck is dramatic enough this is usually informative. But I could not apply this to the very very short running cgi-bin processes.

So I set out to establish a similar work load in a single long running MapScript application. In doing so I discovered a few things. First, that there was no way to easily load the OWSRequest parameters from an url except through the QUERY_STRING environment variable. So I extended mapscript/swiginc/owsrequest.i to have a loadParamsFromURL() method. My test script then looked like:

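```python
import mapscript

# Load the mapfile once and reuse it for every request.
map = mapscript.mapObj('cnes.map')

# Capture MapServer's output in a buffer instead of writing it to stdout.
mapscript.msIO_installStdoutToBuffer()

# Repeat the same WMS GetMap request so the process runs long enough to
# be sampled in gdb.
for i in range(1000):
    req = mapscript.OWSRequest()
    req.loadParamsFromURL('LAYERS=truemarble-gdal&FORMAT=image/jpeg&SERVICE=WMS&VERSION=1.1.1&REQUEST=GetMap&STYLES=&EXCEPTIONS=application/vnd.ogc.se_inimage&SRS=EPSG%3A900913&BBOX=1663269.7343875,1203424.5723063,1673053.6740063,1213208.511925&WIDTH=256&HEIGHT=256')
    map.OWSDispatch(req)
```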

The second thing I learned is that Thomas' recent work to directly use libjpeg and libpng for output in MapServer had not honoured the msIO_ IO redirection mechanism needed for the above. I fixed that too.

This gave me a process that would run for a while and that I could debug with gdb. A sampling of "what is going on" showed that much of the time was being spent loading TIFF tags from directories, particularly the tile offset and tile size tag values.

The base file used is 130000 x 150000 pixels, and is internally broken up into nearly 900000 256x256 tiles. Any map request would only use a few tiles but the array of pointers to tiles and their sizes for just the base image amounted to approximately 14MB. So in order to get about 100K of imagery out of the files we were also reading at least 14MB of tile index/sizes.
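The figures above can be checked with a little arithmetic. The post does not state the band layout or the width of each array entry, so the sketch below assumes three bands stored as separate planes and 8 bytes per entry. Under those assumptions the numbers line up with the tile count and index size quoted.

```python
import math


def tile_index_size(width, height, tile, planes, bytes_per_entry):
    """Count tiles and the bytes needed for the offset and size arrays."""
    tiles_across = math.ceil(width / tile)
    tiles_down = math.ceil(height / tile)
    tiles = tiles_across * tiles_down * planes
    # Two arrays per directory: one of tile offsets, one of tile sizes.
    index_bytes = tiles * bytes_per_entry * 2
    return tiles, index_bytes


# Assumed, not stated in the post: 3 separate planes, 8 bytes per entry.
tiles, index_bytes = tile_index_size(130000, 150000, 256, 3, 8)
print(tiles, index_bytes)  # 893064 14289024
```

That is 508 x 586 tiles per plane, a little under 900000 tiles in total, and about 14.3 million bytes of index for the base image alone.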

The GDAL overview case was worse than the MapServer overview case because when opening the file GDAL scans through all the overview directories to identify what overviews are available. This means we have to load the tile offset/size values for all the overviews regardless of whether we will use them later. When the offset/size values are read to scan a directory they are subsequently discarded when the next directory is read. So in cases where we need to come back to a particular overview level we still have to reload the offsets and sizes.

For several years I have had some concerns about the efficiency of files with large tile offset/size arrays, and with the cost of jumping back and forth between different overviews with GDAL. This case highlighted the issue. It also suggested an obvious optimization: avoid loading the tile offset and size values until we actually need them. If we access an image directory (i.e. an overview level) to get general information about it, such as the size, but we don't actually need imagery, we can completely skip reading the offsets and sizes.
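The idea of deferring the load can be illustrated with a toy model. This is only a sketch of the general lazy loading pattern, not the libtiff implementation. The class, the `fake_read_index` helper and the tile counts are all hypothetical.

```python
class TiffDirectory:
    """Toy model of one image directory (base image or overview level)."""

    def __init__(self, width, height, tile_count, read_index):
        self.width = width
        self.height = height
        self.tile_count = tile_count
        self._read_index = read_index  # callable that does the costly read
        self._offsets = None           # stays unloaded until a tile is needed

    def tile_offset(self, tile):
        # Load the offset array on first use only, then keep it.
        if self._offsets is None:
            self._offsets = self._read_index(self.tile_count)
        return self._offsets[tile]


reads = []  # records how many entries each costly read had to fetch


def fake_read_index(count):
    reads.append(count)
    return [i * 65536 for i in range(count)]


levels = [TiffDirectory(1024, 1024, 16, fake_read_index),
          TiffDirectory(512, 512, 4, fake_read_index),
          TiffDirectory(256, 256, 1, fake_read_index)]

# Scanning every level for its size touches no offset array at all.
sizes = [(d.width, d.height) for d in levels]

# Only the level actually used for imagery pays for its index.
offset = levels[1].tile_offset(2)
```

After this runs, `reads` holds a single entry for the four-tile level. The base image's much larger array was never read.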

So I set about implementing this in libtiff. It is helpful being a core maintainer of libtiff as well as GDAL and Mapserver. :-) The change was a bit messy, and also it seemed a bit risky due to how libtiff handled directory tags. So I treat the change as as an experimental (default off) build time option controlled by the new libtiff configure option --enable-defer-strile-load.

With the change in place, I now get comparable results using the two different overview strategies. Yipee! Pleased with this result I have commited the changes in libtiff CVS head, and pulled them downstream into the "built in" copy of libtiff in GDAL.

However, it occurs to me that there is still an unfortunate amount of work being done to load the tile offset/size vectors when doing full resolution image accesses for MapServer. A format that computed the tile offset and size instead of storing all the offsets and sizes explicitly might do noticeably better for this use case. In fact, Erdas Imagine (with a .ige spill file) is such a format. Perhaps tonight I can compare to that and contemplate a specialized form of uncompressed TIFF file which doesn't need to store and load the tile offsets/sizes.
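For uncompressed data every tile has the same size, so its location can be derived rather than looked up. A minimal sketch, assuming tiles are stored contiguously in index order starting at a known `data_start`. The function and its parameters are hypothetical and do not describe the actual Imagine .ige layout or any existing TIFF feature.

```python
def computed_tile_location(tile_index, data_start, tile_width, tile_height,
                           bytes_per_pixel):
    """Return (offset, size) of a tile without consulting a stored index."""
    # Every uncompressed tile occupies the same number of bytes.
    tile_bytes = tile_width * tile_height * bytes_per_pixel
    return data_start + tile_index * tile_bytes, tile_bytes
```

With a layout like this, a request that touches a handful of tiles costs a handful of multiplications instead of a multi-megabyte read of offsets and sizes.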

I would like to thank Thomas Bonfort for identifying this issue, and providing such an easy to use set of scripts to reproduce and demonstrate the problem. I would also like to thank MapGears and USACE for supporting my time on this and other MapServer raster problems.
